Over the last few years, the idea of "fake news", or in other terms "disinformation", has gone mainstream. Before we step into the topic, it helps to look at the definition of disinformation:

"Disinformation is false or misleading information that is spread deliberately to deceive." - Wikipedia

The trend was further underlined by the Cambridge Analytica revelations, in which a private firm was used to create and run highly targeted, sophisticated disinformation campaigns aimed at voters through social media across different geographical regions. Now that the global Covid-19 crisis is accelerating the 4th industrial revolution (communication and inter-connectivity), more and more people are active on the internet and social media. This in turn increases the opportunity (financial or otherwise) for companies similar to Cambridge Analytica to run disinformation campaigns for their customers, targeting this new group of internet users.

How does disinformation relate to Cyber Threat Intelligence?

Most organizations that are currently setting up their Cyber Threat Intelligence (CTI) function are still at a relatively immature stage and are generally not yet facing disinformation contaminating their intelligence sources (at least, there is no widespread crisis in the community as of yet). However, there is a real risk that disinformation campaigns will break out of the political domain and, in the future, influence the CTI sources that organizations rely on. This is where disinformation becomes relevant to Cyber Threat Intelligence Services.

How does disinformation affect the quality of Cyber Threat Intelligence?

With disinformation campaigns running in the wild (now or in the future), there is a real risk that the major quality factors a Cyber Threat Intelligence product should possess will degrade. This is an after-effect of using bad or contaminated intelligence sources to produce the intelligence products. The quality factors of the final intelligence product that are impacted by disinformation campaigns are the following:

- Completeness - Expected comprehensiveness. Intelligence can be complete even if optional data is missing: as long as the data meets the expectations of the consumer, it is considered complete.

  * A new emerging requirement might be that existing disinformation narratives are mentioned when reporting on certain cyber threats.

- Objectiveness - The method of intelligence collection and analysis should ideally always arrive at the same intelligence product, and should be politically neutral.

- Accurateness - The degree to which the intelligence product resembles reality; disinformation might skew this towards falsehood.

- Contextualization - The context surrounding the intelligence product; it can sometimes be important to mention the disinformation campaigns a certain threat is related to.
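As a minimal sketch of how contaminated sources could be caught before they degrade a product, the Python below checks the sources of a report against a watchlist of outlets known to push disinformation. Everything here is hypothetical: `DISINFO_WATCHLIST`, `IntelReport` and `review_report` are illustrative names, the `.invalid` domains are placeholders, and the watchlist is assumed to come from a dedicated disinformation tracking source. Flagged sources are added to the report context (contextualization), and the share of clean sources is returned as a rough input for an accurateness review.

```python
from dataclasses import dataclass, field

# Hypothetical watchlist: source identifier -> disinformation campaign it is
# known to push. Assumed to be maintained by a disinformation tracking source.
DISINFO_WATCHLIST = {
    "example-news.invalid": "Campaign A (hypothetical)",
    "forum-x.invalid": "Campaign B (hypothetical)",
}


@dataclass
class IntelReport:
    """Hypothetical, simplified intelligence report."""
    title: str
    sources: list[str]
    context: list[str] = field(default_factory=list)


def review_report(report: IntelReport, watchlist: dict[str, str]) -> float:
    """Flag watchlisted sources and return the share of sources NOT on the watchlist.

    Each flagged source adds one context note (added only once, even on re-review).
    A report without any sources returns 0.0, since nothing in it can be trusted.
    """
    if not report.sources:
        return 0.0
    contaminated = [s for s in report.sources if s in watchlist]
    for source in contaminated:
        note = f"Source {source} is linked to disinformation: {watchlist[source]}"
        if note not in report.context:
            report.context.append(note)
    return 1 - len(contaminated) / len(report.sources)
```

For a report citing one watchlisted source out of two, `review_report` returns 0.5 and adds one context note. The ratio is only a crude signal: it tells an analyst where to look, not whether the report is actually wrong.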

It is also important to mention that a disinformation campaign can be a precursor to a cyber attack, which might help anticipate or even predict certain attacks emerging on the cyber threat landscape.

The proposed solution: "Disinformation Analysis Service"

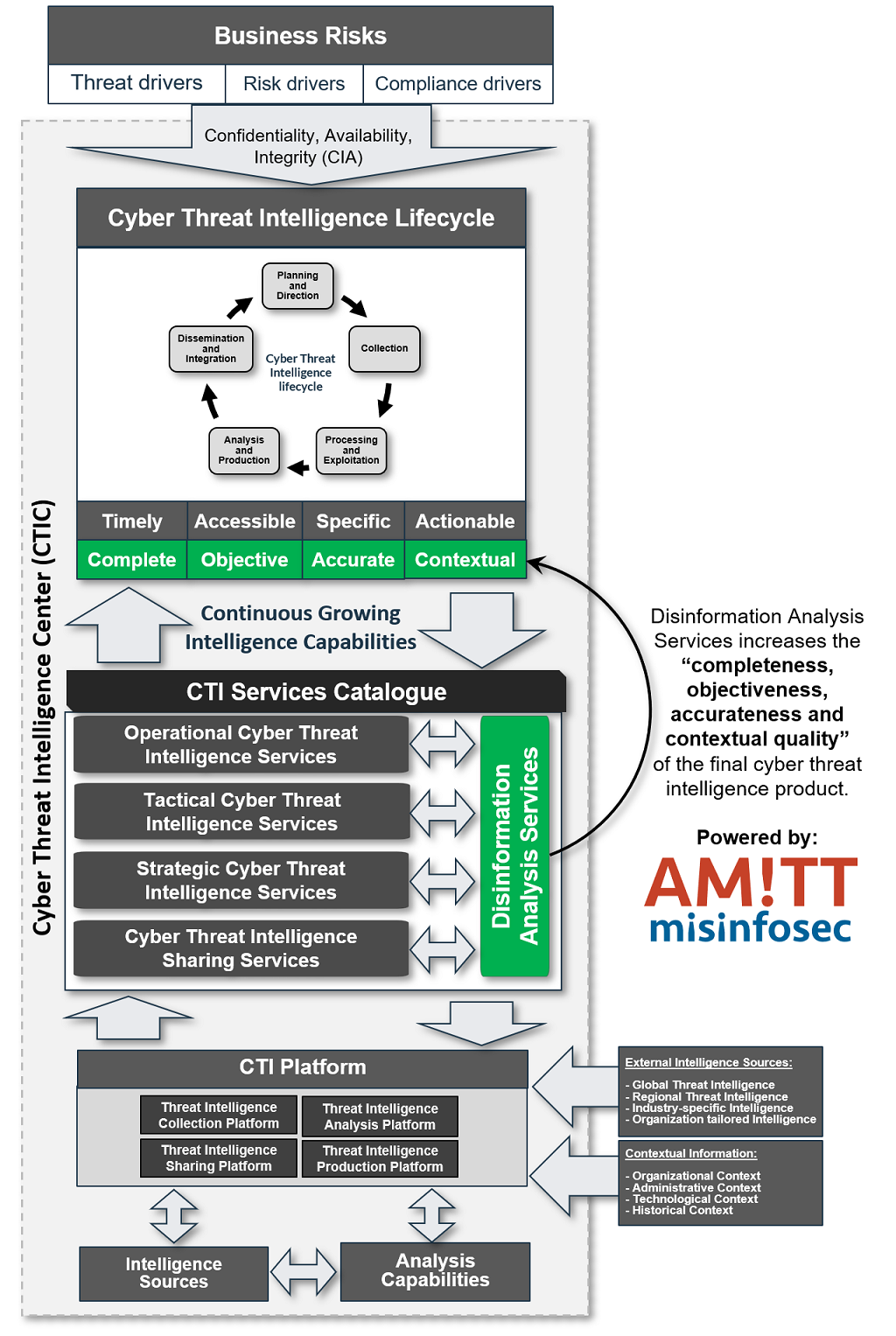

To be able to counter this quality degradation of the intelligence product it might help being aware of any active disinformation campaigns that are run throughout the global cyber threat landscape. I want to propose a solution to augment the existing Cyber Threat Intelligence (CTI) function with a supportive "Disinformation analysis Service" within a Cyber Threat Intelligence Center (CTIC). This will help augment the existing CTI Analysis processes with a "disinformation aware" dimension and helping push up the completeness, objectiveness, accurateness and contextualization of the final threat intelligence product.

To illustrate the relation between CTI Services, Quality factors and Disinformation analysis services I've created the following diagram:

How will the "Disinformation Analysis Service" work within the intelligence lifecycle?

To get more specific about what this service might entail, we can look at the Cyber Threat Intelligence lifecycle:

- Planning and Direction - First things first: there should be a requirement, coming from the organization or from the emerging threat landscape, to actively augment the intelligence product with information on possible disinformation campaigns.

- Collection - Selecting the sources to start collecting from: organizations that actively track disinformation campaigns worldwide, ideally across geographical regions.

- Processing and exploitation - Standardized formats and protocols (such as STIX for representing the data and TAXII for exchanging it) should enable automated collection and enrichment of active or emerging disinformation campaigns within the Threat Intelligence Platform.

- Analysis and production - Analyzing disinformation campaigns and determining how they relate to the organization's existing threat landscape.

- Dissemination and integration - Communicating the relevant disinformation campaigns to the other Cyber Threat Intelligence Analysis functions within the Cyber Threat Intelligence Center (CTIC).
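To make the processing step more concrete, here is a minimal sketch of how a tracked disinformation campaign could be expressed as a STIX 2.1 Campaign object so a Threat Intelligence Platform can ingest it. It builds plain dictionaries with the standard library to stay dependency-free; the OASIS `stix2` Python library offers validated object classes if you prefer. The helper names `stix_timestamp` and `disinformation_campaign`, and the choice of `"disinformation"` as a label value, are assumptions for illustration, not part of any existing tooling.

```python
import json
import uuid
from datetime import datetime, timezone


def stix_timestamp(dt: datetime) -> str:
    """Format a timezone-aware datetime as a STIX 2.1 UTC timestamp (millisecond precision)."""
    dt = dt.astimezone(timezone.utc)
    return dt.strftime("%Y-%m-%dT%H:%M:%S.") + f"{dt.microsecond // 1000:03d}Z"


def disinformation_campaign(name: str, description: str, objective: str,
                            first_seen: datetime) -> dict:
    """Build a minimal STIX 2.1 Campaign object describing a disinformation campaign."""
    now = stix_timestamp(datetime.now(timezone.utc))
    return {
        "type": "campaign",
        "spec_version": "2.1",
        "id": f"campaign--{uuid.uuid4()}",
        "created": now,
        "modified": now,
        "name": name,
        "description": description,
        "objective": objective,
        "first_seen": stix_timestamp(first_seen),
        # 'labels' is an open-vocabulary common property; this value is our own choice.
        "labels": ["disinformation"],
    }


if __name__ == "__main__":
    campaign = disinformation_campaign(
        name="Fake outage narrative (hypothetical)",
        description="Coordinated posts claiming a service outage to push a fake support site.",
        objective="Drive users towards a credential phishing page",
        first_seen=datetime(2020, 4, 1, tzinfo=timezone.utc),
    )
    print(json.dumps(campaign, indent=2))
```

Once the campaign is a STIX object, it can be shared over TAXII like any other intelligence, and the dissemination step becomes a matter of relating it to existing indicators or threat actors in the platform.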

How can one model disinformation campaigns in a standardized format?

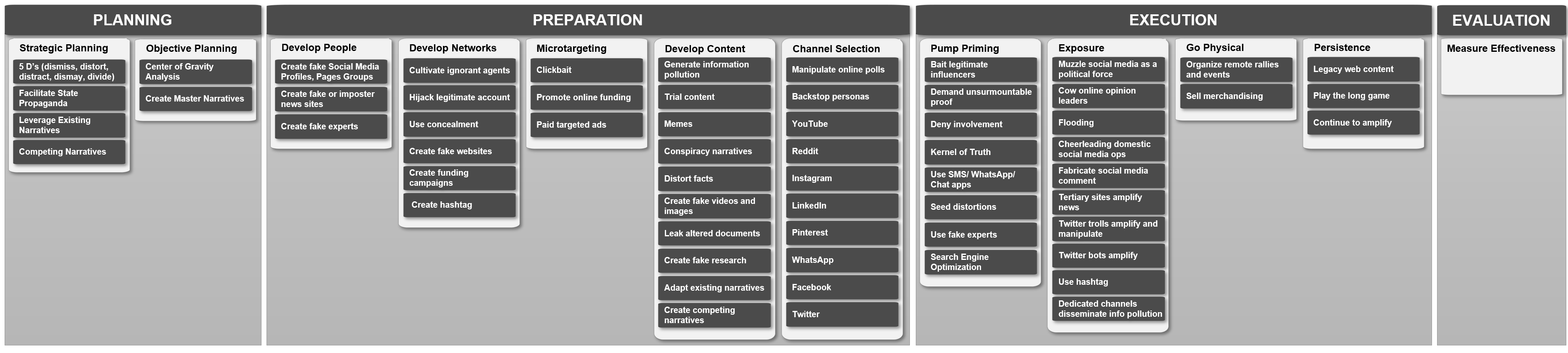

To be able to consume, produce and distribute Disinformation intelligence it needs to be normalized in a framework that is of similar nature like the MITRE ATT&CK framework. Introducing Credibility Coalition's AM!TT Framework:

AMITT (Adversarial Misinformation and Influence Tactics and Techniques) is a framework designed for describing and understanding disinformation incidents. AMITT is part of misinfosec - work on adapting information security (infosec) practices to help track and counter misinformation, and is designed as far as possible to fit existing infosec practices and tools. - AMITT Github Page

This framework has a similar structure as ATT&CK with phases, tactics, tasks and techniques in a single overview. In the following diagram you can see the these different phases in a detailed manner.

Additional information on each specific step can be found on the AMITT GitHub page. In a recent update, the maintainers stated that they are working on STIX templates, so that this type of information can be easily distributed among threat intelligence sharing partners.
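Because AMITT follows the ATT&CK pattern of phases containing tactics containing techniques, tagging an incident works much like ATT&CK tagging. The sketch below groups observed technique IDs by phase. It is illustrative only: the phase names are assumed from AMITT's published overview, while every tactic and technique ID and name (`TA-X1`, `TX-001`, ...) is a made-up placeholder, not an AMITT entry; a real implementation would load the actual catalogue from the AMITT GitHub repository. `FRAMEWORK`, `index_techniques` and `summarize_incident` are hypothetical names.

```python
from collections import defaultdict

# Hypothetical AMITT-style hierarchy: phase -> tactic -> techniques.
# Tactic/technique IDs and names are placeholders, NOT copied from AMITT.
FRAMEWORK = {
    "Planning": {"TA-X1 Objective planning": ["TX-001 Define target audience"]},
    "Preparation": {"TA-X2 Develop content": ["TX-002 Create fake personas",
                                              "TX-003 Produce misleading articles"]},
    "Execution": {"TA-X3 Exposure": ["TX-004 Amplify via bot networks"]},
    "Evaluation": {"TA-X4 Measure effectiveness": ["TX-005 Track engagement"]},
}


def index_techniques(framework: dict) -> dict:
    """Map each technique ID (the first token of its entry) to its (phase, tactic)."""
    index = {}
    for phase, tactics in framework.items():
        for tactic, techniques in tactics.items():
            for technique in techniques:
                index[technique.split()[0]] = (phase, tactic)
    return index


def summarize_incident(observed_ids: list[str], framework: dict) -> tuple[dict, list]:
    """Group observed technique IDs by phase; IDs not in the framework are returned separately."""
    index = index_techniques(framework)
    by_phase = defaultdict(list)
    unknown = []
    for tid in observed_ids:
        if tid in index:
            by_phase[index[tid][0]].append(tid)
        else:
            unknown.append(tid)
    return dict(by_phase), unknown
```

For example, `summarize_incident(["TX-002", "TX-004", "TX-999"], FRAMEWORK)` yields `{"Preparation": ["TX-002"], "Execution": ["TX-004"]}` plus `["TX-999"]` as unknown. Seeing which phases a campaign has reached gives analysts a structured way to discuss how far it has progressed, and unknown IDs signal that the local catalogue needs updating.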

Should "Disinformation Analysis Service" be part of a CTI Service Catalogue?

The AM!TT framework makes it considerably easier to integrate this type of information into Threat Intelligence Platforms, which helps push up the completeness, objectiveness, accurateness and contextualization of the final threat intelligence product. Currently, however, it might be a bit early to consider this type of service, as most CTI functions are still in the "threat feed" stage of maturity and widespread disinformation among Cyber Threat Intelligence sources has not yet emerged. Nevertheless, given the obvious trends in the world, it will only be a matter of time until integrating a Disinformation Analysis Service shifts from a "could have" to a "should have".